Two employees. $65 million in profit. An FDA warning. And a security flaw a teenager could exploit.

Sam Altman predicted this would happen. He just didn’t say it would look like this.

In September 2024, a 41-year-old self-taught programmer named Matthew Gallagher launched a telehealth company from his house in Los Angeles. He spent $20,000 and two months building it. He used ChatGPT, Claude, and Grok to write the code. Midjourney and Runway to generate the ads. ElevenLabs to handle customer communications. No venture capital. No co-founders. No employees.

Fourteen months later, Medvi had generated $401 million in sales. Net profit margin: 16.2%. That’s $65 million in profit. With two people: Matthew and his younger brother Elliot, whom he hired in April 2025 as his first and only employee.

The New York Times verified the financials.

For context: Hims and Hers, the publicly traded telehealth company doing the same thing, reported $2.4 billion in revenue last year. With 2,442 employees. And a 5.5% net profit margin. Gallagher is running nearly three times their margin with a headcount of two.
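The comparison is easy to check. Here is a minimal Python sketch using only the figures quoted above. The per-employee numbers are derived here, not taken from the original reporting, and the two revenue figures cover different windows (Medvi’s first fourteen months versus Hims and Hers’ last reported year), so treat the output as a rough comparison.

```python
# Figures as quoted in the article. Variable names are illustrative.
medvi_sales = 401_000_000        # first fourteen months
medvi_margin = 0.162             # net profit margin
medvi_headcount = 2

hims_revenue = 2_400_000_000     # last reported year
hims_margin = 0.055
hims_headcount = 2_442

# Profit implied by sales and margin.
medvi_profit = medvi_sales * medvi_margin

# How many times larger Medvi's margin is.
margin_ratio = medvi_margin / hims_margin

# Derived metric (not in the source): revenue per person on payroll.
medvi_sales_per_head = medvi_sales / medvi_headcount
hims_revenue_per_head = hims_revenue / hims_headcount

print(f"Medvi implied profit:      ${medvi_profit / 1e6:.1f}M")
print(f"Margin ratio (Medvi/Hims): {margin_ratio:.2f}x")
print(f"Medvi sales per head:      ${medvi_sales_per_head / 1e6:.1f}M")
print(f"Hims revenue per head:     ${hims_revenue_per_head / 1e6:.2f}M")
```

The margin ratio comes out just under 3, which is where “nearly three times” comes from. The per-head gap is far larger than the margin gap, which is the real story of the thin architecture.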

This is the story everyone is sharing right now. And most of the coverage stops here, at the inspiring part. VirtualUncle doesn’t.

How Medvi AI Actually Works

Medvi sells GLP-1 weight loss drugs (the same active ingredients in Ozempic and Wegovy) through a telehealth model. You go to the website, fill out a form, get connected to a doctor, get a prescription, and the drugs ship to your house. Starting at $179 for the first month.

Gallagher didn’t build the hard part. He didn’t hire doctors. He didn’t open pharmacies. He didn’t handle shipping or regulatory compliance. He outsourced all of that to two existing platforms: CareValidate and OpenLoop Health. They provide the licensed physicians, prescription processing, pharmacy fulfillment, shipping logistics, and compliance.

Medvi owns exactly one thing: the customer relationship. Branding, website, paid media, checkout flow, and customer service. Gallagher built all of that with AI.

The architecture is deliberately thin. And that’s the point. He treated every business function as a prompt. Code, content, visuals, voice, customer service, analytics. All AI-generated. All maintained by one person staring at a screen. The automation tools that connect these AI outputs into actual workflows are what make a one-person operation like this physically possible.

If the model sounds familiar, it should. Every major AI company is racing to own healthcare right now. Amazon, OpenAI, Anthropic, and Perplexity all launched health AI products in the same window. Gallagher just got there faster with fewer people and a credit card.

His previous startup, Watch Gang, had 60 employees and never turned a profit. More people meant higher costs and slower decisions. With Medvi, every potential hire had to justify itself against that experience. Almost none of them could.

The Medvi AI Stack: Every Tool He Used

ChatGPT, Claude, and Grok for writing code and website copy, and for building the AI agents that connected his systems together. Midjourney and Runway for ad creative. ElevenLabs for AI voice tools that communicate with customers. Custom AI agents for real-time business analytics and performance monitoring.

That’s the entire team. No designers. No engineers. No marketing department. No customer service reps.

It’s worth getting more specific about what each tool actually did in the stack, because “he used ChatGPT” doesn’t tell you much.

ChatGPT handled the bulk of the code generation. Gallagher described himself as a self-taught programmer, not a software engineer. ChatGPT wrote the frontend, the checkout flow, and the initial integrations with CareValidate and OpenLoop’s APIs. When something broke, he pasted the error back in and iterated.

Claude handled the more complex reasoning tasks: analyzing business metrics, drafting regulatory language, and building the AI agents that monitored performance in real time. The difference between ChatGPT and Claude in Gallagher’s workflow was basically the difference between a fast generalist and a careful specialist.

Midjourney and Runway generated all the ad creative. Every Facebook ad, every Instagram story, every testimonial-style video asset. At Medvi’s scale of spending, a traditional creative team would have cost $200K+ per year. Gallagher spent effectively zero on creative labor.

ElevenLabs handled the voice layer. Customer communications that would normally require a call center were automated with AI-generated voice. When you called Medvi, you talked to an AI. Most customers didn’t know.

300 customers in month one. 1,000 more in month two. 250,000 customers by end of 2025. On track for $1.8 billion in 2026.

If you’re reading VirtualUncle, you already know these tools. You’ve probably used most of them. The difference between Gallagher and everyone else isn’t the tools. It’s that he treated them as employees, not assistants. Every function that would normally require a person, he replaced with a prompt and a pipeline. It’s the same principle behind selling AI automations to small businesses: find the repeatable work, remove the human from the loop, charge for the output.

Medvi AI: The Part Nobody Else Is Writing About

Here’s where the “inspiring AI success story” gets complicated.

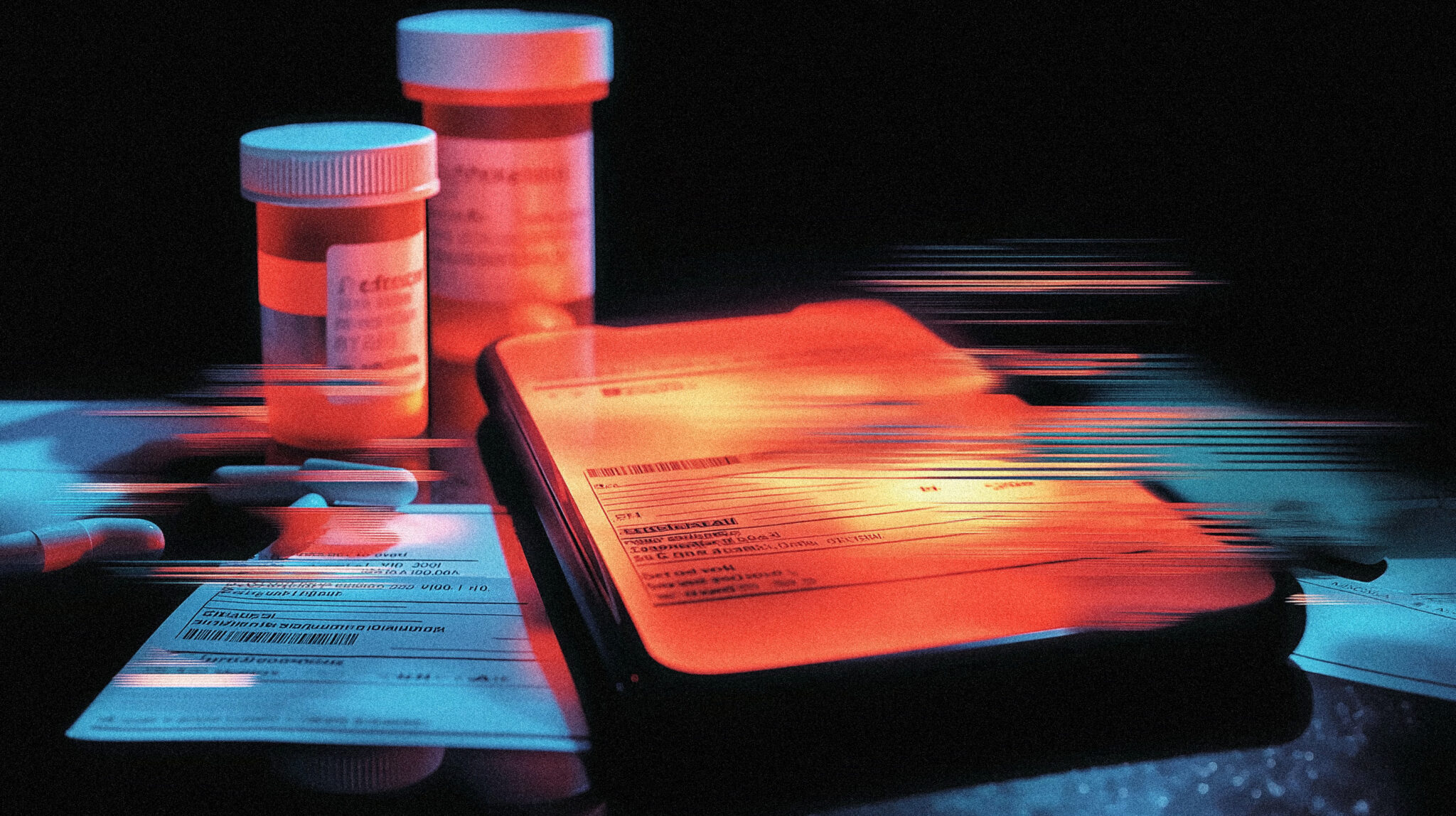

In February 2026, the FDA sent Medvi a formal warning letter. The agency found that Medvi’s website falsely implied the company was the compounder of its drugs (it isn’t) and used language suggesting FDA approval that compounded products don’t actually have. Claims like “Same active ingredient as Wegovy and Ozempic” were flagged as misleading.

Medvi wasn’t alone. The FDA issued warning letters to over 30 telehealth companies in March 2026 for similar violations. This was an industry-wide crackdown, not a targeted investigation. But it raises a question: when your entire marketing operation is AI-generated, who’s checking the claims?

The customer service chatbot fabricated drug prices that Gallagher then had to honor. It hallucinated product lines that didn’t exist. These aren’t theoretical risks. They’re things that actually happened. The same AI reliability concerns that make people nervous about AI prescribing medications apply here too, except Medvi’s chatbot wasn’t prescribing drugs. It was making up prices for them, which is somehow both less dangerous and more embarrassing.
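One common guardrail for this failure mode is to check every price in a drafted reply against a catalog before it goes out. The sketch below illustrates that idea only. It is not how Medvi’s chatbot works, and the catalog, function names, and regex are all assumptions. The only price taken from the article is the $179 first month.

```python
import re
from decimal import Decimal

# Hypothetical approved price list. Only $179 appears in the article.
APPROVED_PRICES_USD = {Decimal("179.00")}

# Matches dollar amounts such as $179, $179.00, or $1,299.50.
PRICE_PATTERN = re.compile(r"\$\s?(\d[\d,]*(?:\.\d{1,2})?)")


def unapproved_prices(reply: str) -> list:
    """Return every dollar amount in the reply that is not in the catalog."""
    quoted = [Decimal(m.replace(",", "")) for m in PRICE_PATTERN.findall(reply)]
    return [price for price in quoted if price not in APPROVED_PRICES_USD]


def safe_to_send(reply: str) -> bool:
    """A draft is sendable only if every price it quotes is approved."""
    return not unapproved_prices(reply)


draft = "We can do $99 today, normally $179."
if not safe_to_send(draft):
    # In a real pipeline this would route the draft to a human or a fallback.
    print("Blocked, unapproved prices:", unapproved_prices(draft))
```

A check like this only catches invented numbers. It does nothing about invented product lines, which would need a similar lookup against a product catalog.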

Then there’s the security. A fintech founder named Caleb Bacher signed up for Medvi after seeing a Facebook ad. He noticed that his patient approval page URL ended in a sequential number. He changed the number by one digit and found himself looking at another patient’s complete record: name, email, phone, weight, goal weight, and medication order. No login required. No authentication. No session token.

A textbook IDOR (insecure direct object reference) vulnerability. One of the most basic, most documented security flaws in web development. The kind of thing a first-year CS student learns to avoid.
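For readers who haven’t seen one, here is a minimal Python sketch of the pattern and the usual fix. It is not Medvi’s code. The record store, account IDs, and function names are hypothetical, and a real app would do this inside its web framework’s request handling.

```python
import secrets
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PatientRecord:
    owner_id: str  # account that owns this record
    name: str


# Hypothetical in-memory store keyed by sequential IDs, as in the URL described above.
RECORDS = {
    1001: PatientRecord(owner_id="acct_a", name="Patient A"),
    1002: PatientRecord(owner_id="acct_b", name="Patient B"),
}


class Forbidden(Exception):
    """Raised when the caller may not see the requested record."""


def get_record_vulnerable(record_id: int) -> PatientRecord:
    # IDOR: trusts the ID from the URL and never asks who is requesting it.
    return RECORDS[record_id]


def get_record_checked(record_id: int, session_account_id: Optional[str]) -> PatientRecord:
    # Fix, step 1: require an authenticated session.
    if session_account_id is None:
        raise Forbidden("login required")
    record = RECORDS.get(record_id)
    # Fix, step 2: verify ownership. Missing and foreign records raise the
    # same error, so IDs cannot be enumerated by watching the responses.
    if record is None or record.owner_id != session_account_id:
        raise Forbidden("record not available")
    return record


def new_public_id() -> str:
    # Defense in depth: unguessable identifiers instead of sequential ones.
    return secrets.token_urlsafe(16)
```

Random identifiers make guessing harder, but the ownership check is the actual fix. Without it, any leaked URL still exposes the record.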

When you build a $401 million company with two people and no security team, this is what happens. The speed that makes the business possible also creates the gaps that make it vulnerable.

What Medvi AI Actually Proves About AI Companies

The coverage of this story is splitting into two camps. Camp one: “This proves AI can replace entire companies.” Camp two: “This proves AI-built companies are dangerous and irresponsible.”

Both are partially right and mostly missing the point.

What Gallagher actually proved is that the infrastructure layer of a business can now be rented instead of built. He didn’t create a healthcare company. He created a marketing and checkout layer on top of someone else’s healthcare company. CareValidate and OpenLoop do the regulated work. Medvi does the customer acquisition. AI handles everything in between.

That’s not a one-person company in the way most people imagine it. It’s a one-person distribution engine sitting on top of an existing supply chain. The “company” is really just Gallagher, a laptop, and a stack of AI tools pointed at a market where customers are desperate and willing to pay.

Which is why the moat problem matters. Medvi owns no proprietary technology. No exclusive supplier relationships. No patents. Nothing stopping someone else from building the same thing next week with the same tools. If you want to build something with a stronger foundation, AI digital products at least give you ownership of what you create. Medvi owns a checkout page and a Facebook ad budget.

The only competitive advantage is execution speed, and that advantage erodes the moment a competitor with more resources decides to copy the model.

What Medvi Means for AI Solopreneurs

Altman predicted the one-person, billion-dollar company. What he didn’t predict is that it would also be the one-person, billion-dollar company with an FDA warning, a security vulnerability a teenager could exploit, and an AI chatbot making up prices.

The tools work. The question is whether a two-person team can handle what happens when a $1.8 billion company has a real problem and there’s nobody to call except your brother.

The Medvi story is being shared in every AI community as proof that you can build a massive company alone with AI. That’s true. It’s also incomplete.

What Gallagher actually did was identify a market with desperate demand (GLP-1 drugs have an 18-month waitlist through traditional healthcare), find infrastructure providers who handle the regulated parts (CareValidate and OpenLoop), and build a marketing and checkout layer on top with AI. The total addressable market did the heavy lifting. The AI tools did the execution.

The replicable part: using AI to replace traditionally human-dependent business functions. Customer service, ad creative, website development, analytics, and communications are all genuinely replaceable by AI tools today. Gallagher proved that at scale. That part of the playbook works for anyone building a service business, from automation consulting to AI bot building to digital products.

The non-replicable part: the specific market timing. GLP-1 drugs exploded in demand at the exact moment AI tools became capable enough to run a one-person operation. That convergence won’t happen again for this specific category. Someone building the same thing today faces 30+ competitors who all read the same NYT article.

The lesson that actually transfers: don’t try to copy Medvi. Study the architecture. One person owning the customer relationship, outsourcing the regulated infrastructure, and using AI for everything in between. That model works in dozens of industries. Telehealth just happened to be the one where the margins were absurd enough to make the story go viral.

Medvi AI FAQ: Revenue, Tools and Concerns

How did Medvi reach $401 million in sales with two people?

Medvi outsourced all regulated healthcare functions (doctors, prescriptions, pharmacy, shipping, compliance) to CareValidate and OpenLoop Health. Matthew Gallagher used ChatGPT, Claude, Grok, Midjourney, Runway, and ElevenLabs to build and run everything else: the website, ad creative, customer service, analytics, and checkout flow. He owned only the customer relationship layer while the infrastructure providers handled the rest. The New York Times verified the financials.

What AI tools did Medvi use?

ChatGPT, Claude, and Grok for code and website copy. Midjourney and Runway for ad creative and video content. ElevenLabs for AI-generated voice communications with customers. Custom AI agents for real-time business analytics and performance monitoring. No human designers, engineers, marketers, or customer service representatives were employed.

Did Medvi receive an FDA warning?

Yes. In February 2026, the FDA sent Medvi a formal warning letter for falsely implying it was the compounder of its GLP-1 drugs and using language suggesting FDA approval that compounded products do not have. Medvi was one of over 30 telehealth companies that received similar warnings during an industry-wide FDA crackdown in early 2026.

What security and reliability problems did Medvi have?

Medvi had a documented IDOR security vulnerability where changing a sequential number in a patient approval page URL exposed other patients’ complete records, including name, email, phone, weight, and medication orders, with no authentication required. The company also had issues with its AI customer service chatbot fabricating drug prices and hallucinating product lines. These incidents reflect the risks of running a scaled operation with a two-person team and no dedicated security personnel.