Read that again.

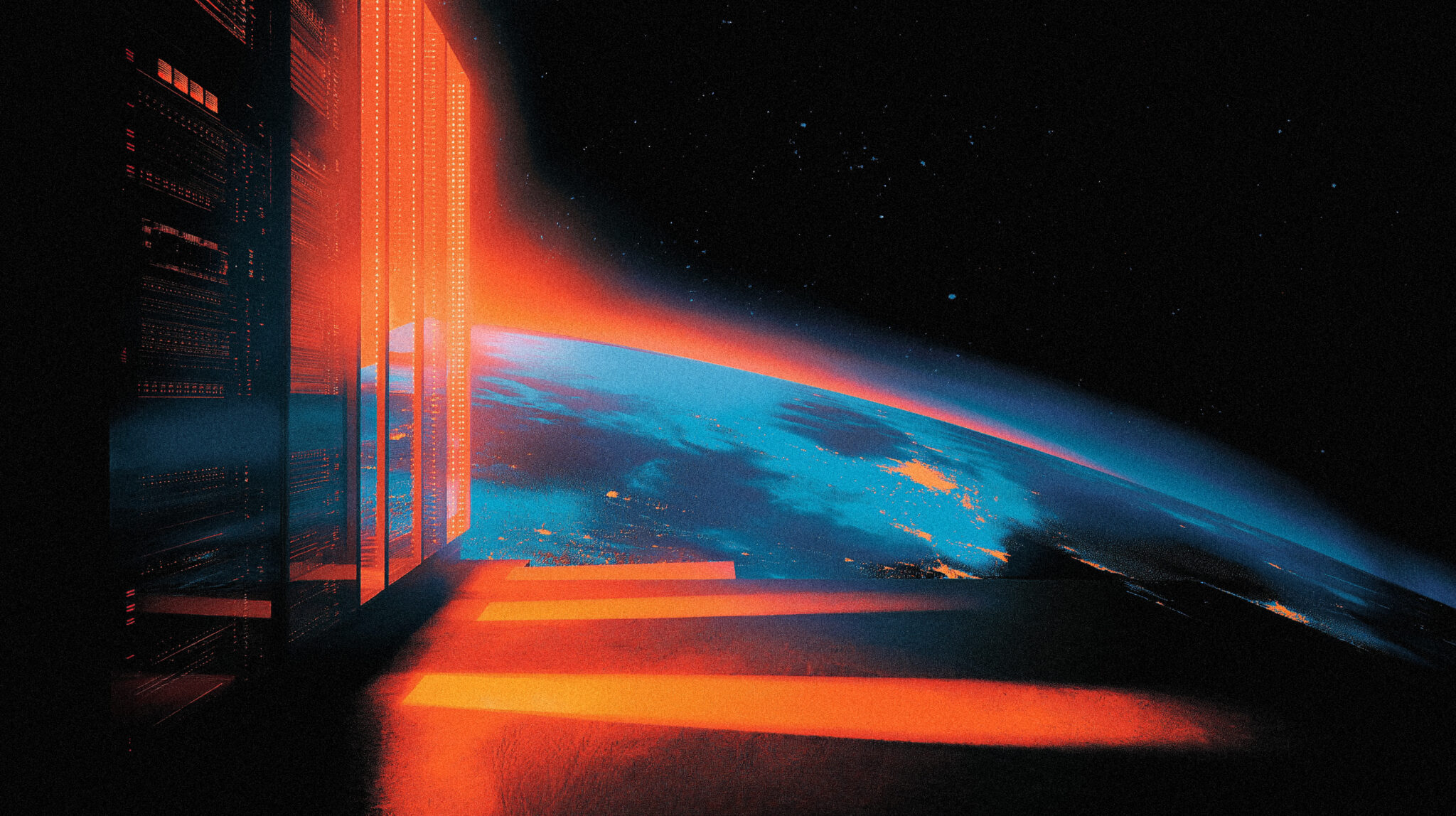

Anthropic, the company Musk called “evil” eight months ago, just signed a deal to lease the entire Colossus 1 data center from SpaceX. As part of the same announcement, both companies said they’re exploring “multiple gigawatts of orbital AI compute capacity.”

Server farms. In space.

Six months ago this would have been a Wired think piece about the year 2035. Now it’s a footnote in a Wednesday press release.

What most coverage is missing: this isn’t just a compute deal. It’s two companies admitting that Earth doesn’t have enough power to keep training frontier AI models. The orbital data center pitch has been around for years as a thought experiment. Nobody serious was actually planning to do it. Then on Wednesday, Anthropic’s chief compute officer Tom Brown tweeted that “we need to move a lot of atoms” to keep up with AI demand, and the plainest reading is that some of those atoms are headed for low Earth orbit.

That’s where we are now.

The Anthropic-SpaceX Deal Is Already Insane

Strip away the orbital part and what’s left is still a story.

Anthropic just agreed to use 100% of the compute at Colossus 1 in Memphis, Tennessee. That’s 300+ megawatts of capacity and over 220,000 Nvidia GPUs (H100s, H200s, and next-gen GB200 accelerators). For context, Colossus 1 was xAI’s flagship facility. Musk built it in record time as the foundation of his Grok ambitions and his pitch that xAI could compete with OpenAI and Anthropic.

Now Anthropic is renting all of it.

Effective today, the deal lets Anthropic double Claude Code’s five-hour rate limits for Pro, Max, Team, and Enterprise plans. They’re also removing the peak-hour throttling that paying customers have complained about for months, and they’re significantly raising API rate limits for the Claude Opus models. Real wins for users who’ve been hitting walls.

The deeper context is what makes this weird. Musk has spent the last year publicly calling Anthropic “misanthropic” and “evil.” In February he tweeted that the company “hates Western civilization.” The Pentagon, which has been embracing xAI’s Grok for military applications, declared Anthropic a supply chain risk in March and blacklisted it from defense work. Anthropic is currently suing the Trump administration to reverse that designation. The lawsuit is ongoing.

So the lineup looks like this: Anthropic is suing the federal government over Pentagon decisions that favor Musk’s AI company, while simultaneously leasing all the compute at Musk’s flagship data center.

Musk’s response was almost gracious, which is the most suspicious part of the whole thing. He posted that he spent the past week with senior Anthropic team members and “no one set off my evil detector.” He also added that SpaceXAI reserves the right to reclaim compute if Anthropic’s AI “engages in actions that harm humanity,” which is the kind of clause you put in a contract when you assume your counterparty might still be evil but you need their money.

Musk also said SpaceXAI had already moved training to Colossus 2, which raises an obvious question. If Colossus 1 was such great infrastructure, why is it suddenly available for lease? The answer is probably some combination of “Colossus 2 is online” and “we need Anthropic’s money before our IPO in June.” SpaceX filed confidentially for a public listing on April 1 and is targeting a $1.75 trillion to $2 trillion valuation. Adding a named compute customer like Anthropic strengthens the AI infrastructure pitch in its S-1.

This is a deal where everyone needs each other and nobody likes it.

The Anthropic-SpaceX Orbital Compute Pitch

Buried in both announcements is a sentence that should have been the headline.

Anthropic and SpaceX both said they’re exploring partnerships to develop “multiple gigawatts of orbital AI compute capacity.” Tom Brown, Anthropic’s chief compute officer, posted on X that “we need to move a lot of atoms in order to keep up with AI demand, and there’s nobody better at quickly moving atoms (on or off planet Earth).”

To translate: Anthropic and SpaceX are talking about building data centers in orbit and using SpaceX’s Starship rockets to deliver them.

This isn’t a far-future research direction. Musk has been pitching space-based data centers since he merged xAI into SpaceX in February. His argument was that solar power in space is nearly continuous (no clouds, no atmospheric loss, 24-hour exposure), and that cooling can be handled by radiating waste heat into the vacuum. The pitch was that once AI compute demand outstripped Earth’s power grid capacity, the only place left to scale was up.
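To get a feel for what those two claims imply at gigawatt scale, here is a rough back-of-envelope sketch. It is not taken from either company’s announcement. The solar constant (about 1,361 W/m² above the atmosphere) and the Stefan-Boltzmann law are standard physics; the 30% panel efficiency, the 300 K radiator temperature, the 0.9 emissivity, and the two-sided radiator are illustrative assumptions, and the sketch ignores eclipse periods, absorbed sunlight, and heat from Earth. In a vacuum there is no air to carry heat away, so all waste heat has to leave as thermal radiation.

```python
"""Back-of-envelope sizing for a hypothetical orbital data center.

All efficiency and temperature values below are illustrative assumptions,
not figures from Anthropic or SpaceX.
"""

SOLAR_CONSTANT_W_PER_M2 = 1361.0   # mean solar irradiance above Earth's atmosphere
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def solar_array_area_m2(power_w: float, efficiency: float = 0.30) -> float:
    """Panel area needed to generate power_w, assuming constant full sunlight."""
    return power_w / (SOLAR_CONSTANT_W_PER_M2 * efficiency)


def radiator_area_m2(
    heat_w: float,
    temp_k: float = 300.0,
    emissivity: float = 0.9,
    sides: int = 2,
) -> float:
    """Radiator area needed to reject heat_w purely by thermal radiation.

    Uses P = sides * emissivity * sigma * A * T^4 and ignores any heat the
    radiator absorbs from the Sun or Earth, so it is an optimistic lower bound.
    """
    flux_w_per_m2 = sides * emissivity * STEFAN_BOLTZMANN * temp_k**4
    return heat_w / flux_w_per_m2


if __name__ == "__main__":
    for gigawatts in (1, 5):
        watts = gigawatts * 1e9
        # Assume nearly all electrical power drawn by the chips ends up as waste heat.
        solar_km2 = solar_array_area_m2(watts) / 1e6
        radiator_km2 = radiator_area_m2(watts) / 1e6
        print(f"{gigawatts} GW: ~{solar_km2:.1f} km^2 of panels, "
              f"~{radiator_km2:.1f} km^2 of radiator")
```

Under these assumptions, each gigawatt needs roughly 2.4 km² of solar panels plus about 1.2 km² of radiator, which gives a sense of how many “atoms” the orbital pitch actually involves.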

Most people read that pitch as Musk being Musk. Galaxy brain stuff. The kind of thing you say to make headlines while you’re actually busy doing something else.

But Anthropic just signed onto the vision. Officially. In a press release. With actual gigawatts as the unit of measurement.

The unit matters. A gigawatt is one billion watts. A typical large nuclear reactor produces about 1 gigawatt, and the largest power plants on Earth (Three Gorges Dam in China, Kashiwazaki-Kariwa in Japan) have capacities of roughly 8 to 22 gigawatts. When two AI companies say they’re exploring “multiple gigawatts of orbital compute,” they’re talking about putting the output of several large nuclear reactors into low Earth orbit.

That’s not a research experiment. That’s an admission that Earth’s power infrastructure can’t keep up with AI training demand and the next decade of AI scaling will happen somewhere besides Earth.

The Power Problem Is Now Public

This is where the story connects to a bigger trend nobody wants to admit out loud.

The US power grid is already buckling under AI data center demand. In the PJM grid region (covering the Mid-Atlantic), wholesale power supply costs jumped from $2.2 billion to $14.7 billion in a single year. Data centers accounted for nearly two-thirds of that increase. Residential electricity rates have risen about 32% nationally over the last five years. Senator Bernie Sanders and Rep. Alexandria Ocasio-Cortez introduced the AI Data Center Moratorium Act in March, and the bill has been at the center of public debate this week. Over 100 local communities have already passed their own moratoriums on new data center construction.
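The PJM figures are easier to read as plain arithmetic. This quick sketch uses only the numbers above and treats “nearly two-thirds” as exactly 2/3, so the data center figure is a slight upper bound.

```python
# PJM wholesale power supply costs, in billions of USD, as cited above.
BEFORE_BN = 2.2
AFTER_BN = 14.7
# "Nearly two-thirds" is approximated as 2/3, so the result is a slight overestimate.
DATA_CENTER_SHARE = 2 / 3

increase_bn = AFTER_BN - BEFORE_BN
data_center_bn = increase_bn * DATA_CENTER_SHARE
growth_multiple = AFTER_BN / BEFORE_BN

print(f"Increase: ${increase_bn:.1f}B")
print(f"Attributed to data centers: up to ~${data_center_bn:.1f}B")
print(f"Year-over-year multiple: {growth_multiple:.1f}x")
```

The increase alone is about $12.5 billion, more than five times the previous year’s entire cost, and data centers account for up to roughly $8.3 billion of it.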

Anthropic’s compute partnerships read differently when you have that context.

Their April deal with Google for $40 billion in compute included 5 GW of capacity coming online in 2027. They have a separate 5 GW agreement with Amazon, with nearly 1 GW of new capacity by year-end. There’s a Microsoft-NVIDIA partnership covering $30 billion of Azure capacity. A $50 billion infrastructure investment with Fluidstack. And now SpaceX for another 300+ MW, with the orbital pitch on top.

Add up the announced compute commitments across just Anthropic’s major deals and you’re looking at roughly 15+ gigawatts of capacity by 2027-2028. That’s more than the UK’s entire installed nuclear capacity. For one AI company.
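Only some of those deals are quoted in gigawatts; the Microsoft-NVIDIA and Fluidstack deals are quoted in dollars. The small sketch below (the deal labels are just shorthand for the paragraph above) sums only the explicitly stated capacity, which shows how much of the roughly 15 GW estimate depends on converting those dollar figures into power.

```python
from typing import Optional

# Capacity figures as stated in this article; None means the deal was
# described only in dollars, with no gigawatt figure given.
ANNOUNCED_CAPACITY_GW: dict[str, Optional[float]] = {
    "Google (April deal)": 5.0,
    "Amazon": 5.0,
    "Microsoft-NVIDIA (Azure, $30B)": None,
    "Fluidstack ($50B)": None,
    "SpaceX Colossus 1": 0.3,  # "300+ MW", so this is a floor
}

stated_gw = sum(gw for gw in ANNOUNCED_CAPACITY_GW.values() if gw is not None)
unquantified = [name for name, gw in ANNOUNCED_CAPACITY_GW.items() if gw is None]

print(f"Explicitly stated capacity: {stated_gw:.1f} GW")
print("Quoted only in dollars:", ", ".join(unquantified))
```

The explicitly stated figures come to about 10.3 GW; the remainder of the estimate rests on how much power the dollar-denominated deals end up buying.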

OpenAI, which closed a $122B round in April with Amazon, Nvidia, SoftBank, and Microsoft, is doing roughly the same thing on the same scale. Same with Google. Same with Microsoft’s own internal AI infrastructure.

The math doesn’t work on Earth. There’s not enough grid capacity, not enough power generation, not enough land near population centers, not enough water for cooling at most viable sites. Local moratoriums are accelerating. Regulatory pushback is real.

Hence: orbital compute. Not because it’s elegant. Because it’s the only direction left with no zoning board.

What This Means For Users (Short Term)

If you pay for Claude Pro or Claude Max, today’s the day you should notice things working better.

Doubled rate limits on Claude Code. No more peak-hour throttling on the consumer plans. Higher API limits on Opus models. The infrastructure boost is real and effective immediately. If you’ve been hitting “you’ve reached your limit, please wait” messages on Claude Code, those should be substantially rarer for the next several months.

For everyone else, the takeaway is more subtle. Anthropic just removed one of its biggest user complaints (rate limits) by leasing 220,000 GPUs from a company whose CEO publicly called Anthropic evil. That’s how desperate the compute situation is. If you’re paying for AI tools right now, you’re paying for capacity that doesn’t really exist yet. Companies are stitching together infrastructure deals with whoever has GPUs and praying it lasts long enough to ship the next model.

If you’ve been considering upgrading from free to paid, this is the kind of moment where the paid tier actually delivers what the marketing says it does. Briefly.

What This Means Long Term

The orbital pitch isn’t going to ship in 2026. Probably not in 2027 either.

But the conversation has shifted. A year ago, the AI scaling debate was about whether models would keep getting better, whether scaling laws would hold, whether the bubble would pop. The conversation now assumes models keep getting better and scaling keeps working, and the actual debate is whether Earth’s physical infrastructure can keep up.

It can’t. Multiple AI companies are openly saying so by signing infrastructure deals at scales that don’t fit on the grid. The US is already running out of grid capacity in key regions. Communities are passing moratoriums. Power costs are spiking. Water is becoming a bottleneck. The next round of AI data centers is going to be built somewhere with cheap power and weak regulation, which means the Middle East, parts of Africa, or eventually space.

The Anthropic-SpaceX deal is the moment those conversations stopped being theoretical. Two of the most important AI companies on the planet just put “orbital AI compute capacity” in writing as something they’re actively exploring. Tom Brown specifically called out moving atoms “on or off planet Earth.” That’s official corporate language. Not Musk shitposting. Not a research paper. A formal partnership announcement.

The next time you read about an AI model being delayed, ask whether the bottleneck is the model or the megawatts. Increasingly, it’s going to be the megawatts. And the answer is going to involve rockets.

The Pentagon Irony Nobody’s Mentioning

There’s one more layer to this that most coverage skipped.

Anthropic was declared a supply chain risk by the Pentagon in March and blacklisted from defense work. The Pentagon has been embracing xAI’s Grok for military applications. Anthropic sued the Trump administration over the designation in San Francisco and Washington, and that lawsuit is still active.

Now Anthropic is leasing all the compute at xAI’s former flagship data center, while xAI itself dissolves as a separate entity and gets folded into SpaceX. The federal government picked Musk’s AI company. Musk’s AI company just leased its former flagship infrastructure to Anthropic. Anthropic is suing the federal government. Everyone keeps doing business with everyone else.

This is what concentration looks like up close. The frontier AI industry is roughly five companies trading compute, GPUs, and lawsuits with each other in increasingly creative arrangements while the rest of the world tries to figure out where to build the next 100 megawatts of power generation. The disagreements are real but the dependencies are deeper.

Musk reserving the right to reclaim Colossus 1 if Anthropic “harms humanity” is the most honest line in the entire announcement. Translation: I think you might be evil but I’m taking your money anyway, and if it turns out I was right, I’m pulling the plug.

Welcome to the AI infrastructure economy.