Google sent Anthropic $10 billion last Friday.

Anthropic is going to send most of it back.

That’s not a joke. That’s the deal.

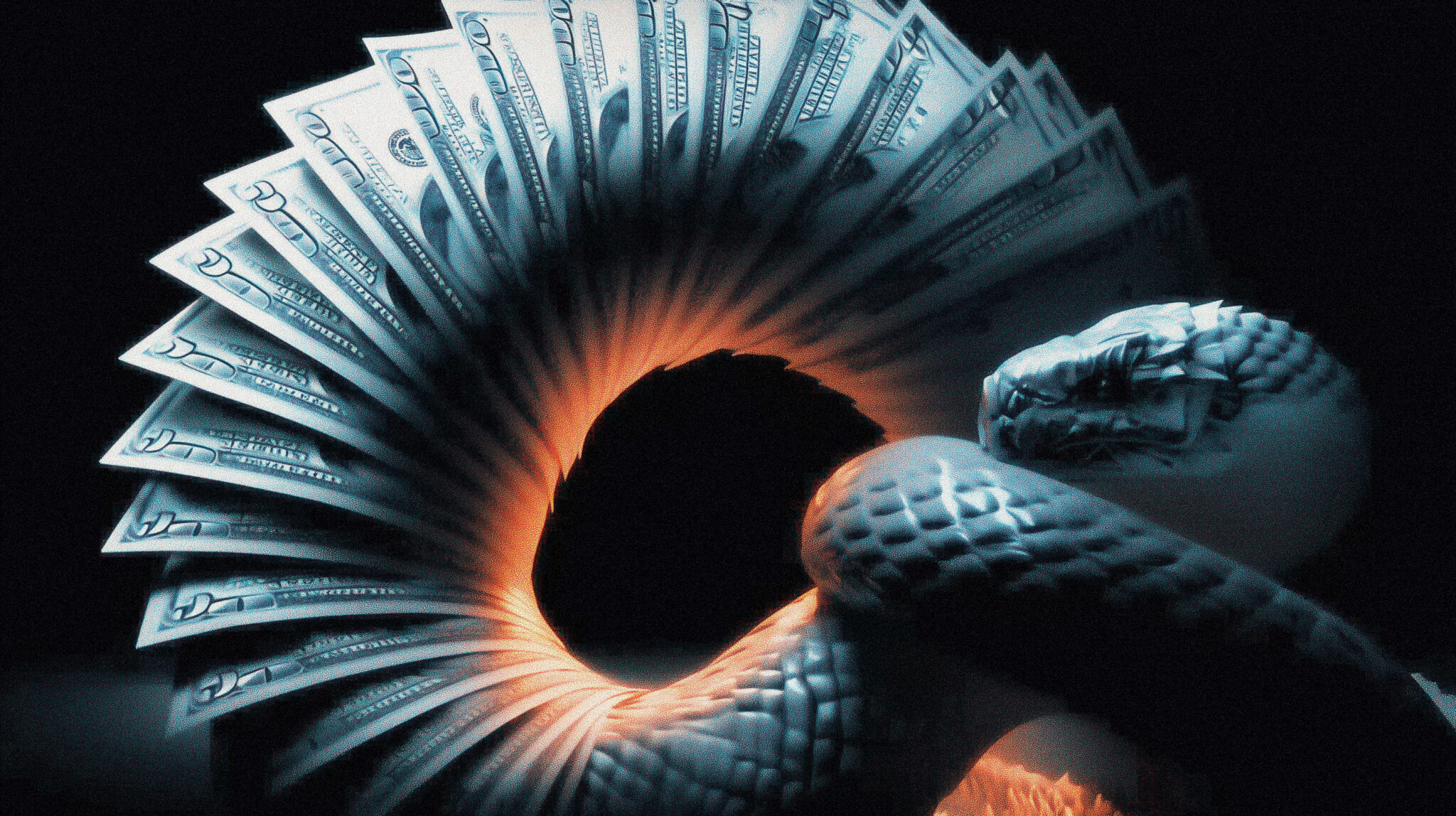

The Google Anthropic investment announcement clocked in at up to $40 billion total. The first $10 billion landed Friday at a $350 billion valuation. The remaining $30 billion is contingent on performance milestones. As part of the same announcement, Anthropic committed to using 5 gigawatts of Google’s TPU compute capacity over the next five years. Per TechCrunch’s reporting on the deal, Anthropic pays Google for that compute. So when you actually trace the dollars, what looks like a venture investment is closer to a cloud contract dressed in a different wardrobe. Google buys equity in Anthropic. Anthropic buys TPUs from Google. Same balance sheet. Two press releases. Stock goes up.

And the most interesting part is that Microsoft figured this out three years ago.

The Google Anthropic Investment Loop Has a Cap Table Now

Microsoft put $13 billion into OpenAI. OpenAI runs on Azure. OpenAI sends most of its compute spend back to Microsoft. Microsoft books that revenue, the AI division shows growth, the stock benefits. The capital flowed in a circle and everyone involved came out richer than they started.

Google watched that happen and decided to do the same thing with Anthropic.

The structure is almost mechanical. Anthropic’s training stack runs predominantly on Google’s TPUs (with Amazon’s Trainium chips as a backup). That’s not an accident, it’s an architectural commitment. Anthropic can’t just pull up stakes and switch to Nvidia in a weekend. Their inference stack, their kernels, their performance tuning are all optimized for TPU workloads. Switching providers would mean rebuilding for months.

So when Google extends $10 billion in equity and 5 gigawatts of TPU capacity, both sides know exactly how this plays out. The cash flows back. Google gets a venture stake AND a multi-year cloud customer at scale. Anthropic gets the compute it physically needs to ship Claude 5 Opus and stay in the frontier model race.

It’s a closed loop. And the closed loop is getting tighter.

The Google Anthropic Investment Math Is Insane

To understand why this matters, you have to look at what Anthropic just stacked up in roughly three weeks.

April 6: Anthropic announces a deal with Google and Broadcom for 3.5 gigawatts of TPU capacity starting in 2027.

Mid-April: Amazon expands its Anthropic investment to up to $25 billion in committed capital, tied to commercial milestones.

April 24: Google commits up to $40 billion plus an additional 5 gigawatts of TPU capacity over five years.

By the end of April, Anthropic has roughly $65 billion in pledged equity capital and on the order of 10 gigawatts of reserved compute (the two Google deals listed above account for 8.5 gigawatts by themselves). For context, 10 gigawatts is enough power to run somewhere between 7 and 10 million American homes. Anthropic is going to use it to train and serve large language models. The Google Anthropic investment is the largest single chunk of that stack, but it doesn’t sit alone.
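If you want to check the tally yourself, here is a rough back-of-envelope sketch in Python. The dollar and gigawatt figures are the ones quoted in the timeline above. The per-home power draw (1.0 to 1.4 kilowatts on average) is my own assumption for illustration, not a number from any of the announcements, and the function and variable names are made up for this sketch.

```python
# Figures as quoted in the timeline above; none are independently verified here.
equity_pledges_busd = {"Amazon": 25, "Google": 40}
compute_deals_gw = {"Google/Broadcom, April 6": 3.5, "Google, April 24": 5.0}

total_equity_busd = sum(equity_pledges_busd.values())
itemized_gw = sum(compute_deals_gw.values())

# The article's headline compute figure, treated as a rounded number.
HEADLINE_GW = 10

KW_PER_GW = 1_000_000


def homes_powered_millions(gigawatts, kw_per_home):
    """Millions of homes a given capacity could supply at an average draw."""
    homes = gigawatts * KW_PER_GW / kw_per_home
    return homes / 1_000_000


# ASSUMPTION: an average American home draws roughly 1.0 to 1.4 kW.
low_estimate = homes_powered_millions(HEADLINE_GW, kw_per_home=1.4)
high_estimate = homes_powered_millions(HEADLINE_GW, kw_per_home=1.0)

print(f"Pledged equity: ${total_equity_busd}B")
print(f"Itemized compute deals: {itemized_gw} GW")
print(f"Homes at {HEADLINE_GW} GW: {low_estimate:.1f}M to {high_estimate:.1f}M")
```

Under those assumptions the equity adds up to $65 billion and the home count lands in the 7 to 10 million range. The two compute deals itemized above sum to 8.5 gigawatts, so the 10-gigawatt headline is best read as a rounded figure.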

The annualized revenue numbers are equally absurd. Anthropic was at $1 billion in revenue at the end of 2024. $9 billion by the end of 2025. $30 billion as of early April 2026. That kind of curve doesn’t show up in normal businesses. It shows up when you’re selling something that didn’t exist 18 months ago to enterprises that suddenly have an existential reason to buy it.
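To put a number on how steep that curve is, here is a small sketch that computes the growth multiple between each pair of data points. The revenue figures are the ones quoted above. The helper name `growth_multiples` is just an illustration.

```python
# Annualized revenue in billions of dollars, as quoted in the paragraph above.
revenue_run_rate_busd = [
    ("end of 2024", 1),
    ("end of 2025", 9),
    ("early April 2026", 30),
]


def growth_multiples(points):
    """Return (period label, multiple) for each consecutive pair of points."""
    results = []
    for (start_label, start), (end_label, end) in zip(points, points[1:]):
        results.append((f"{start_label} -> {end_label}", end / start))
    return results


multiples = growth_multiples(revenue_run_rate_busd)
for label, multiple in multiples:
    print(f"{label}: {multiple:.1f}x")
```

That works out to 9x across 2025, then another 3.3x or so in roughly the first three months of 2026.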

Claude is in production at most of the Fortune 500 right now. Cursor runs Opus 4.7 as the default coding model. Hex, Rakuten, Notion, GitLab, all the rest. Anthropic isn’t just shipping models, it’s running infrastructure for a meaningful slice of corporate America’s AI workloads. The same enterprise pull is what’s driving the agent stack rebuild that’s replacing traditional automation tools, and every one of those workloads burns compute.

The Microsoft Comparison Isn’t Flattering for Anyone

Microsoft’s OpenAI deal was the proof of concept for this whole structure. Invest in the model lab, lock in the cloud relationship, book the revenue, watch the stock climb. It worked. OpenAI’s compute bill at Microsoft is reportedly massive. Microsoft’s Azure AI revenue is one of the headline numbers it leans on every earnings call.

Google watched and learned. The Anthropic deal is the same template, just larger.

Amazon then did its own version. AWS has its own custom AI chip (Trainium), and Anthropic has been quietly using it as a secondary training substrate. Amazon’s $25 billion commitment is contingent on commercial milestones, which is finance-speak for “we’ll write the check when you keep buying our compute.”

That leaves three of the largest companies in the world all essentially funding their own cloud customers. Microsoft funds OpenAI which buys Azure. Google funds Anthropic which buys TPUs. Amazon funds Anthropic which buys Trainium and AWS.

Notice that Anthropic appears twice in that list. They have two of the three hyperscalers as both investors and infrastructure suppliers. That’s either brilliant negotiation or a hostage situation, depending on how you look at it.

What This Means for Everyone Who Isn’t an Anthropic Investor

For the average person paying $20 a month for Claude Pro, this looks like good news on the surface. More compute means better models. Better models mean Opus 4.7 stays sharp and Claude 5 ships on schedule.

But the second-order effects are uglier.

When the same handful of companies fund all the frontier AI labs AND supply all the infrastructure those labs need to compete, what looks like a vibrant market is actually three companies playing pickleball with the future of AI. The independent AI lab essentially doesn’t exist anymore. There’s no path to building a frontier model without billions in compute, and there’s no path to billions in compute without partnering with one of the hyperscalers, and once you partner with a hyperscaler you become structurally dependent on them.

DeepSeek is the obvious counterexample, training V4 for an estimated $5 million on a quarter of the compute their American competitors burn through. But DeepSeek isn’t operating in the same market. Open weights, Chinese government context, no enterprise sales motion. American enterprises aren’t deploying DeepSeek to production for reasons that have nothing to do with the model and everything to do with regulatory exposure.

So the field of “American frontier AI labs” is now three names, and all three are partially owned by hyperscalers who also sell them their compute. That’s not a market. That’s a tournament where the prize is access to the next round.

The Part Nobody on CNBC Mentions

Greg Brockman keeps showing up on press tours saying GPT-5.5 is “a step toward more agentic computing.” Sundar Pichai keeps saying Gemini 3.1 Pro is leading on benchmarks. Dario Amodei keeps saying Anthropic is responsibly scaling toward safer superintelligent AI.

What they’re all also doing is buying compute from each other.

Sam Altman recently criticized Anthropic publicly for not securing enough compute, per CNBC’s coverage of the OpenAI-Microsoft restructuring, which is a wild quote in the context of what was already happening behind the scenes. OpenAI’s own compute scramble has them in deals with Cerebras, multiple cloud providers, and the largest financial commitments to chip suppliers in the history of the technology industry. None of these companies have a comfortable margin of compute headroom right now. They’re all bidding against each other for the same finite supply of GPUs and custom silicon, and the bidding war is what’s driving these gigantic equity-and-compute deals.

The actual product (a more capable AI model) is a side effect of the much larger transaction (a hyperscaler buying themselves a guaranteed AI customer for the next decade).

What Happens Next Is Already Visible

Anthropic is widely reported to be targeting an IPO as early as October 2026. That’s six months from now. The compute deal Google just announced, the Amazon expansion, and the existing Broadcom arrangement all serve one purpose: making the IPO story play well with public market investors.

The story is going to be: Anthropic has secured a decade of compute access, has multiple billion-dollar enterprise customers, is generating $30 billion in annualized revenue, and is the only American frontier model company that’s not single-vendor dependent on Nvidia.

That’s a real story. It’s also a story that depends entirely on the hyperscalers continuing to want this arrangement. Google could decide tomorrow that Gemini is winning enough to deprioritize the Anthropic relationship. Amazon could decide Trainium is mature enough to compete with Nvidia directly without needing a flagship customer. Either move would be catastrophic for Anthropic’s compute access, and Anthropic has no way to hedge against that risk because there are only three hyperscalers in the world who can supply the compute it needs.

So the calmest framing of where AI sits in 2026 is this: three labs, three hyperscalers, and a circular cash flow that benefits all six entities at the expense of competition.

The model wars feel competitive because the press releases are loud. The compute wars are louder, and the press releases are quieter.

The Honest Read

If you’re a regular person trying to figure out which AI tool to pay for, none of this changes much. ChatGPT, Claude, and Gemini are still the three serious options, and the right pick depends on what you’re trying to do, not on whose investor balance sheet looks healthier this quarter. The Google Anthropic investment doesn’t make Claude any better today, it just makes sure Claude can keep getting better tomorrow.

But if you’re trying to understand why every flagship model is getting better at the exact same pace, why the pricing keeps drifting upward despite “unchanged rate cards,” and why every AI lab announcement now comes paired with a compute deal announcement, the answer is in the cap tables.

Google sent Anthropic $10 billion last Friday. Anthropic is going to send most of it back. And next quarter, both companies are going to report record numbers, and the AI press is going to call it healthy market dynamics.

The dollar isn’t moving. It’s just doing laps.