Google showed a brief demo of AI smart glasses with a HUD at I/O 2024.

The pre-recorded demo was shown as part of Google’s announcement of Project Astra, an in-development, real-time, natively multimodal AI assistant that can remember and reason about everything it sees and hears. Current multimodal AI systems, by contrast, capture an image on demand when asked about what they see. Google says Astra works by “continuously encoding video frames, combining the video and speech input into a timeline of events, and caching this information for efficient recall”.
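Google hasn’t published how Astra implements this, but the idea in that quote can be illustrated with a toy sketch. Everything below is an assumption made for illustration: the `Event` and `Timeline` names, the use of plain strings as stand-ins for encoded video frames and speech transcripts, and the keyword-based `recall` are all hypothetical and say nothing about Google’s actual system.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class Event:
    timestamp: float  # seconds since the session started
    modality: str     # "video" or "speech"
    content: str      # hypothetical stand-in for an encoded frame or transcript


class Timeline:
    """Bounded, time-ordered cache of recent video and speech events."""

    def __init__(self, max_events: int = 1000):
        # A deque with maxlen drops the oldest event once the cache is full.
        self.events = deque(maxlen=max_events)

    def add(self, timestamp: float, modality: str, content: str) -> None:
        self.events.append(Event(timestamp, modality, content))

    def recall(self, keyword: str, modality: Optional[str] = None) -> Optional[Event]:
        """Return the most recent matching event, or None if nothing matches."""
        for event in reversed(self.events):
            if modality is not None and event.modality != modality:
                continue
            if keyword.lower() in event.content.lower():
                return event
        return None


# Made-up events, interleaving both modalities on one timeline.
timeline = Timeline(max_events=3)
timeline.add(0.0, "video", "desk with glasses next to a red apple")
timeline.add(1.5, "video", "whiteboard with a system diagram")
timeline.add(2.0, "speech", "where did I leave my glasses?")

# Look back through cached video events for the last sighting of the glasses.
sighting = timeline.recall("glasses", modality="video")
```

The point of the sketch is the contrast with on-demand capture: because observations are cached as they arrive, a question asked later can be answered from something seen earlier, even if it is no longer in view.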

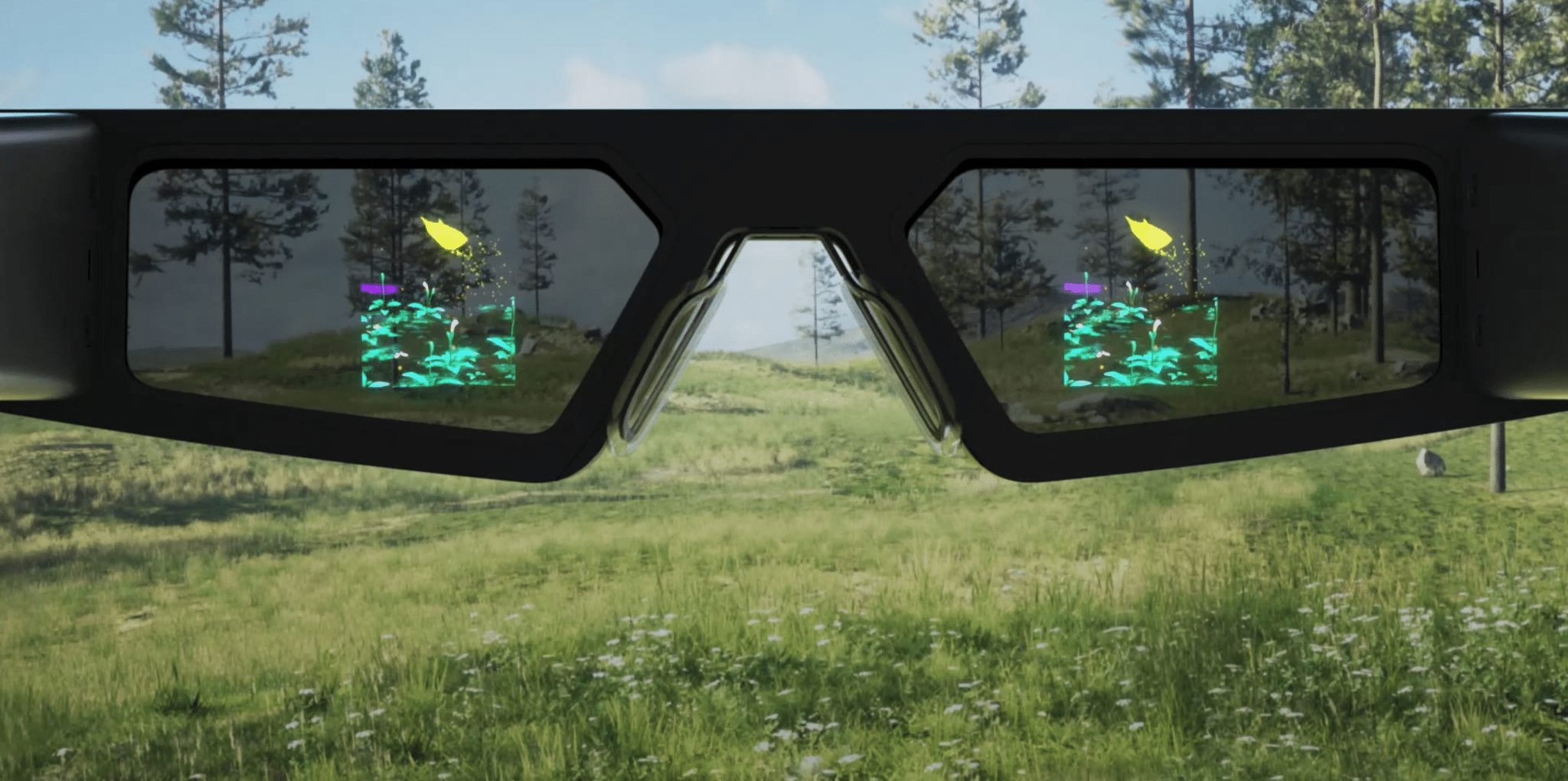

The Project Astra demo video starts on a smartphone, but halfway through the user picks up and puts on a thick pair of glasses.

The smart glasses teaser segment of Google I/O 2024.

These smart glasses were shown to have a fixed heads-up display (HUD) with a blue audio input indicator showing when the wearer is speaking and white text showing the AI’s response.

Google DeepMind CEO Demis Hassabis said “new exciting form factors like glasses” were “easy to envision” as an endpoint for Project Astra, but no specific product announcement was made, and a disclaimer reading “Prototype Shown” appeared at the bottom of the clip near the end.

What we didn’t see in the Google I/O 2024 keynote was any mention of Android XR, the spatial computing platform the company is working on for Samsung’s upcoming headset. Google may be leaving that announcement to Samsung later this year.

Meta is reportedly planning to bring a HUD to the next generation of Ray-Ban Meta smart glasses in 2025. Will Google compete with this directly, or does it plan to provide Project Astra to third-party hardware makers instead?